Dr. Asyikeen Azhar

Chief Technical Officer

Designing creative AI with boundaries, judgment, and humans in the loop.

building AI in the creative space feels different. even as someone who isn’t a “creative” by trade, that space has always felt sacred to me. creative work is a reflection of the human psyche. it captures how people think, what they care about, what the moment in time feels like. you can see this across history. when photography spread in the mid-1800s, painters no longer needed to focus on perfect realism. movements like impressionism emerged in the decades that followed. artists began painting how a scene felt rather than exactly how it looked.

when tools changed, human expression evolved with it. but the soul of the work was still human. every creative piece carries a fingerprint of the person behind it. you can feel it even in something as simple as text. a book is just words on paper, but the voice of the author still comes through - in the rhythm of the sentences, the choice of words, the way ideas are framed. the same message can feel completely different depending on who wrote it.

AI today is very good at mimicking those patterns. it can reproduce tone, style, even creative structure surprisingly well. but the real question isn’t whether AI can do it. the question is whether it SHOULD, and what we lose if it does. that’s the part that can be automated, but shouldn’t be.

so when we started building KLARva, we were very intentional about protecting that space. AI can support the thinking. it can analyse patterns, surface opportunities, and reduce the heavy lifting that happens before something gets created. but taste, instinct, and creative edge should still belong to the human. this belief shapes how we design the product.

once that philosophy was clear, the next question became more practical:

how much control should the AI actually have?

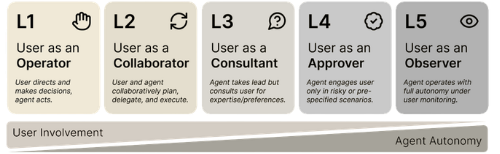

one useful way to think about this is through the levels of autonomy for AI agents, a framework published by the Knight First Amendment Institute.

the framework describes autonomy based on the role a human plays when interacting with an AI system, ranging from operating the system directly to simply observing its actions. in other words, autonomy isn’t just about what the AI can do. it’s about how much decision power the human lets go.

this idea has since influenced governance discussions around agentic AI, including frameworks such as the Model AI Governance Framework for Agentic AI published by the Singapore Government’s Infocomm Media Development Authority (IMDA) on 22 January 2026. it’s the first governance framework created specifically for AI agents that can autonomously plan, reason, and act. in their framework, the autonomy spectrum is simplified into four levels.

where KLARva sits on that spectrum is not accidental. it’s a design decision. here’s how we implement it, using that framework as a guide.

---------------------------------------

within this framework, KLARva sits at the first level: agent proposes, human operates. the system generates strategy and recommendations - video concepts, hooks, captions, publishing times, sounds. but the human decides what actually happens next. ideas can be dragged onto a content calendar, edited, discarded, or partially reused. nothing is executed automatically. the system never publishes content, spends marketing budgets, or takes irreversible actions. execution always stays with the human.

this design choice is deliberate. in creative work, automation should support decisions, not replace them. but KLARva doesn’t stop at level one. it also operates within the second level of the framework: agent and human collaborate.

every action taken by the user becomes a signal. ideas that are discarded tell the system something. concepts that are edited reveal creative preferences. recommendations that are scheduled indicate what resonates. over time, these interactions influence future recommendations. the system improves not just from historical data, but from human judgment inside the workflow.

in that sense, KLARva isn’t just generating ideas. it’s learning alongside the strategist. the AI proposes while the human sharpens.

in creative systems, taste is the signal that matters most.

another key idea in the governance framework is bounded autonomy - defining clear limits on what an AI agent is allowed to do. not every system should be fully autonomous. in many cases, the responsible choice is to constrain the system so it operates within clearly defined boundaries.

for KLARva, this principle is straightforward. the AI does not execute actions outside the platform. instead, the system focuses on what it does best: analysing patterns, surfacing opportunities, and generating strategic recommendations. execution always remains under human control.

this boundary is intentional. in creative work, decisions carry nuance - brand tone, cultural context, timing, instinct. those are things algorithms can support, but not fully own. so KLARva assists the thinking process while leaving the final call to the human.

AI helps with the preparation while humans decide what goes live.
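
one way to picture this kind of boundary in code is an allowlist that is checked before any action runs. the sketch below is illustrative only and is not KLARva's implementation: the `Action` names, `AGENT_ALLOWED`, `BoundaryError`, and `authorise` are all hypothetical.

```python
from enum import Enum


class Action(Enum):
    ANALYSE_PATTERNS = "analyse_patterns"
    SURFACE_OPPORTUNITY = "surface_opportunity"
    RECOMMEND = "recommend"
    PUBLISH = "publish"            # execution: reserved for humans
    SPEND_BUDGET = "spend_budget"  # execution: reserved for humans


# hypothetical allowlist: the only actions the agent may perform on its own
AGENT_ALLOWED = frozenset(
    {Action.ANALYSE_PATTERNS, Action.SURFACE_OPPORTUNITY, Action.RECOMMEND}
)


class BoundaryError(PermissionError):
    """raised when the agent attempts an action outside its boundary."""


def authorise(actor: str, action: Action) -> None:
    """let humans perform any action; let the agent perform only allowlisted ones."""
    if actor == "agent" and action not in AGENT_ALLOWED:
        raise BoundaryError(f"agent may not perform '{action.value}'")


authorise("agent", Action.RECOMMEND)  # allowed: preparation
authorise("human", Action.PUBLISH)    # allowed: the human decides what goes live
try:
    authorise("agent", Action.PUBLISH)
except BoundaryError as err:
    print(err)
```

the point of an allowlist, as opposed to a blocklist, is that any new capability stays off-limits to the agent until someone deliberately adds it.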

another principle in the governance framework is transparency - users should understand when AI is involved and what role it plays. in practice, this means the system shouldn’t behave like a black box.

for KLARva, the AI’s role is clear. the system analyses patterns, identifies emerging trends, and generates strategic options for content. but these outputs are surfaced as recommendations, not decisions. users can review them, adapt them, combine ideas, or discard them entirely. the process remains visible and controllable throughout the workflow.

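this transparency matters for two reasons. first, it builds trust. when outputs are visible, AI becomes a tool for thinking rather than an opaque engine producing answers. second, it preserves creative ownership. because every output arrives as a recommendation that the user can adapt or discard, the final work remains theirs.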

AI can suggest the direction while the human defines the voice.

another important governance principle is ensuring AI systems can learn responsibly from human interaction. in KLARva, user behaviour forms an important feedback loop. when ideas are edited, scheduled, or discarded, those actions become signals. over time, they help refine how future recommendations are generated. instead of relying purely on historical data or static models, the system continuously adapts based on how strategists actually work.

this keeps the AI aligned with human taste, brand context, and real-world decision making, rather than blindly optimising patterns in data. in other words, the system improves not just from data, but from judgment expressed inside the workflow.
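
a minimal sketch of such a feedback loop, assuming each idea is tagged with a theme and each user action nudges that theme's score. the weights, the `PreferenceModel` class, and the theme names are hypothetical and only illustrate the idea. they do not describe how KLARva actually learns.

```python
# hypothetical weights: how strongly each user action nudges a theme's score
SIGNAL_WEIGHTS = {"scheduled": 1.0, "edited": 0.5, "discarded": -1.0}


class PreferenceModel:
    """keeps a running score per theme, built from user actions."""

    def __init__(self) -> None:
        self.scores = {}

    def record(self, theme: str, action: str) -> None:
        """turn one user action on an idea into a signal for its theme."""
        self.scores[theme] = self.scores.get(theme, 0.0) + SIGNAL_WEIGHTS[action]

    def rank(self, themes: list) -> list:
        """order candidate themes by accumulated score, highest first."""
        return sorted(themes, key=lambda t: self.scores.get(t, 0.0), reverse=True)


model = PreferenceModel()
model.record("behind-the-scenes", "scheduled")
model.record("tutorial", "edited")
model.record("trend-remix", "discarded")

print(model.rank(["trend-remix", "tutorial", "behind-the-scenes"]))
# ['behind-the-scenes', 'tutorial', 'trend-remix']
```

a real system would likely weigh recency, brand context, and much more, but the shape is the same: human judgment goes in, and the ordering of future suggestions comes out.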

a final principle emphasised in the governance framework is auditability - the ability to trace how decisions and outputs are produced. as AI systems become more embedded in everyday workflows, it becomes increasingly important to understand how an outcome came to be. for KLARva, recommendations and interactions remain visible within the workflow.

ideas can be reviewed, edited, discarded, or scheduled, forming a traceable history of how content strategies evolve over time. this allows teams to understand not just what decision was made, but how the thinking behind it developed. in creative work, this kind of traceability matters. it allows teams to revisit ideas, understand why certain directions worked, and refine their approach.

AI becomes part of the thinking process, not a hidden engine producing answers. intelligence should not just be powerful. it should also be understandable.
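
a traceable history like this can be sketched as an append-only log of events per idea. this is an assumption-laden illustration, not KLARva's data model: `AuditEvent`, `AuditLog`, and the field names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    idea_id: str
    actor: str   # "agent" or a user identifier
    action: str  # e.g. "proposed", "edited", "scheduled", "discarded"
    note: str
    at: datetime


class AuditLog:
    """append-only: events are added, never modified or removed."""

    def __init__(self) -> None:
        self._events = []

    def record(self, idea_id: str, actor: str, action: str, note: str = "") -> AuditEvent:
        event = AuditEvent(idea_id, actor, action, note, datetime.now(timezone.utc))
        self._events.append(event)
        return event

    def history(self, idea_id: str) -> list:
        """return every event for one idea, in the order it happened."""
        return [e for e in self._events if e.idea_id == idea_id]


log = AuditLog()
log.record("idea-1", "agent", "proposed")
log.record("idea-1", "strategist", "edited", "shortened the hook")
log.record("idea-2", "agent", "proposed")
log.record("idea-1", "strategist", "scheduled")

print([e.action for e in log.history("idea-1")])
# ['proposed', 'edited', 'scheduled']
```

because events are immutable and only ever appended, the log shows who changed what, and in which order, without anyone having to reconstruct it from memory.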

---------------------------------------

ultimately, AI in creative work shouldn’t be about replacing humans. it should be about sharpening them.

good AI systems don’t remove judgment. they make space for better judgment.

KLARva was built with that belief. AI handles the heavy lifting - patterns, signals, strategy. humans bring the taste, instinct, and creative edge. because in the end, that human fingerprint is still the thing people connect with.

the real question isn’t whether AI can generate creative work. the question is how much of that space we want to give it. how should we draw the line between AI assistance and human creativity?

Strategy without systems doesn’t scale. Systems without strategy don’t make sense.

We build, and think, at the intersection.